
Where p = probability of an object being classified to a particular class The cost function for evaluating feature splits in a dataset is the Gini index. Gini index : The metric measures the chances or likelihood of a randomly selected data point misclassified by a particular node.Where p = probability of an element or class in the data The overall objective is to minimize entropy and have more homogeneous decision regions wherein data points belong to a similar class. More the entropy, the more complex the scenario to draw conclusions. Entropy : Entropy defines the randomness in the processed information and measures the amount of uncertainty in it.The resulting branch (sub-tree) has a better metric value than the previous tree.Ĭommonly used cost functions for varied classification and regression tasks include: In greedy methods, splitting is accomplished for all points placed in the same decision region, and successive splits are applied systematically.

Let’s understand each criterion in more detail.ĭecision trees use several metrics to decide the best feature split in a top-down greedy approach. Good decision trees address vital variables, such as deciding upon the features to split, the values of feature split, and the point at which you should stop splitting. However, understanding how these splitting conditions are devised and how many times you need to split the decision space is crucial while developing such tree-based solutions. Tree-based methods apply if-else conditions on features by doing orthogonal splits to build decision regions. The model draws accurate conclusions about the sample’s target value (represented via leaves) by considering observations of the sample population (illustrated via branches). On the other hand, in regression problems, the target variable takes up continuous values (real numbers), and the tree models are used to forecast outputs for unseen data.ĭecision trees using a predictive modeling approach are widely used for machine learning and data mining.

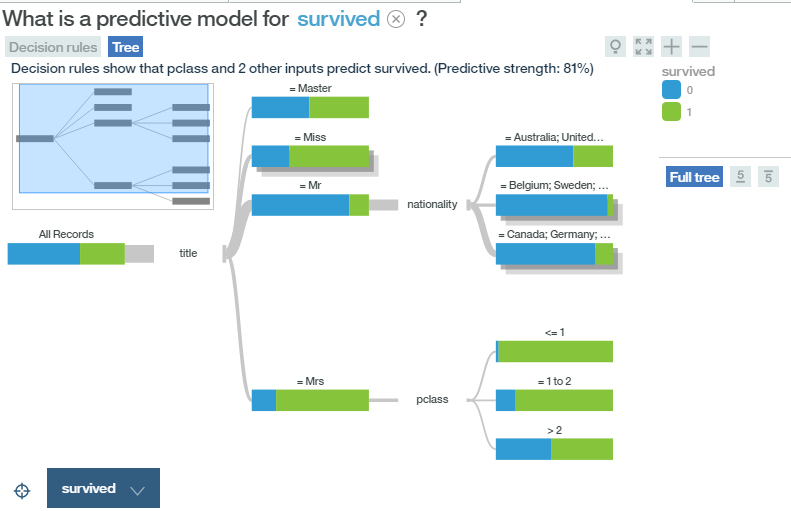

In classification problems, the tree models categorize or classify an object by using target variables holding discrete values. In a decision tree, each internal node represents a test on a feature of a dataset (e.g., result of a coin flip – heads / tails), each leaf node represents an outcome ( e.g., decision after simulating all features), and branches represent the decision rules or feature conjunctions that lead to the respective class labels.ĭecision trees are widely used to resolve classification and regression tasks. Such a tree is constructed via an algorithmic process (set of if-else statements) that identifies ways to split, classify, and visualize a dataset based on different conditions.
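As a minimal sketch (not taken from the original post), both impurity measures can be computed directly from the class labels that reach a node. The helper names `class_probabilities`, `entropy`, and `gini` are hypothetical, and entropy is assumed to use a base-2 logarithm.

```python
import math
from collections import Counter


def class_probabilities(labels):
    """Hypothetical helper: return p_i, the fraction of samples in each class."""
    if not labels:
        raise ValueError("labels must be non-empty")
    total = len(labels)
    return [count / total for count in Counter(labels).values()]


def entropy(labels):
    """Entropy H = -sum(p_i * log2(p_i)); 0.0 for a pure node."""
    return sum(-p * math.log2(p) for p in class_probabilities(labels))


def gini(labels):
    """Gini index = 1 - sum(p_i ** 2); 0.0 for a pure node."""
    return 1.0 - sum(p * p for p in class_probabilities(labels))


if __name__ == "__main__":
    pure = ["yes", "yes", "yes", "yes"]
    mixed = ["yes", "yes", "no", "no"]
    print(entropy(pure), gini(pure))    # 0.0 0.0
    print(entropy(mixed), gini(mixed))  # 1.0 0.5
```

For two evenly balanced classes the sketch returns the maximum values for a binary problem (1 bit of entropy and a Gini index of 0.5), while a pure node scores 0 on both, which is why lower values indicate better splits.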

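The top-down greedy splitting procedure can likewise be sketched in Python. This is an illustrative, CART-style example under stated assumptions rather than the post's own method: it uses the Gini index as the criterion, tries every observed feature value as a threshold, stops when no split lowers impurity or a hypothetical `max_depth` (default 3) is reached, and stores the majority class at each leaf. All function names are hypothetical.

```python
from collections import Counter

# Illustrative sketch; function names and the max_depth default are hypothetical.


def gini(labels):
    """Gini impurity 1 - sum(p_i^2) of a non-empty list of class labels."""
    n = len(labels)
    return 1.0 - sum((c / n) ** 2 for c in Counter(labels).values())


def best_split(rows, labels):
    """Greedy search for the split with the lowest weighted Gini impurity.

    Every candidate is an orthogonal (axis-aligned) test row[feature] <= threshold,
    where threshold is one of the observed feature values.
    Returns (feature, threshold), or None if no split lowers the impurity.
    """
    n = len(labels)
    best, best_score = None, gini(labels)  # a split must beat the parent node
    for feature in range(len(rows[0])):
        for threshold in sorted({row[feature] for row in rows}):
            left = [y for row, y in zip(rows, labels) if row[feature] <= threshold]
            right = [y for row, y in zip(rows, labels) if row[feature] > threshold]
            if not left or not right:
                continue  # skip splits that leave one side empty
            score = len(left) / n * gini(left) + len(right) / n * gini(right)
            if score < best_score:
                best, best_score = (feature, threshold), score
    return best


def build_tree(rows, labels, depth=0, max_depth=3):
    """Recursively split until no split helps or max_depth is reached."""
    split = best_split(rows, labels) if depth < max_depth else None
    if split is None:
        # Leaf node: predict the majority class of the samples that reached it.
        return Counter(labels).most_common(1)[0][0]
    feature, threshold = split
    left = [(r, y) for r, y in zip(rows, labels) if r[feature] <= threshold]
    right = [(r, y) for r, y in zip(rows, labels) if r[feature] > threshold]
    return {
        "feature": feature,
        "threshold": threshold,
        "left": build_tree([r for r, _ in left], [y for _, y in left], depth + 1, max_depth),
        "right": build_tree([r for r, _ in right], [y for _, y in right], depth + 1, max_depth),
    }


def predict(node, row):
    """Walk the tree: each internal node is an if-else test on one feature."""
    while isinstance(node, dict):
        node = node["left"] if row[node["feature"]] <= node["threshold"] else node["right"]
    return node


if __name__ == "__main__":
    rows = [[2.0, 1.0], [3.0, 1.5], [10.0, 1.2], [11.0, 0.8]]
    labels = ["A", "A", "B", "B"]
    tree = build_tree(rows, labels)
    print(tree)  # {'feature': 0, 'threshold': 3.0, 'left': 'A', 'right': 'B'}
    print(predict(tree, [2.5, 1.1]), predict(tree, [9.0, 1.0]))  # A B
```

Each dictionary is an internal node holding one axis-aligned test, and each string is a leaf. Using observed values as thresholds keeps the sketch short; many implementations (scikit-learn among them) place thresholds midway between adjacent sorted feature values instead.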